In January 2005, I wrote my first blog entry. b2evolution was my tool of choice to manage my blog: it was free, simple to install, and more than adequate for my needs. I’d never heard of WordPress back then, though it shares a common origin with b2evolution; both are forks of b2/cafelog, one of the original blogging systems.

Fast-forward ten years, and WordPress rules the world. I’ve used it on countless projects for clients and friends, and it’s an extremely flexible and powerful CMS. I’m also now far more familiar and comfortable with it than I ever was with b2evolution.

Which is why, finally, I’ve migrated this blog to the latest version of WordPress, version 4.1. (If you’re wondering, the photo in the banner is Pan’s Rock in Ballycastle, Co Antrim, taken last April. Here are some more Antrim photos from the same trip.)

For those interested, the nitty-gritty steps required are below; everyone else can stop reading now.

Database Migration

The biggest challenge was transferring the existing blog contents from b2evolution’s database to WordPress’s database. Since many bloggers have travelled this path before, I expected this to be straightforward. However, most of them appeared to (a) be running a much newer version of b2evolution than me, and (b) have made the move long ago, to a much older version of WordPress.

No matter. The first step was to find a script close to what I needed: in this case, import-b2evolution-wp2.php.txt at themikecam.com, referenced by Christian Cawley’s helpful b2evolution migration guide. Unfortunately, themikecam.com is no longer online, but luckily archive.org still has a copy of the most recent import-b2evolution-wp2.php.txt available for download, along with all the older copies.

Although it should go without saying, now is a good time to back up your b2evolution database! Just in case…

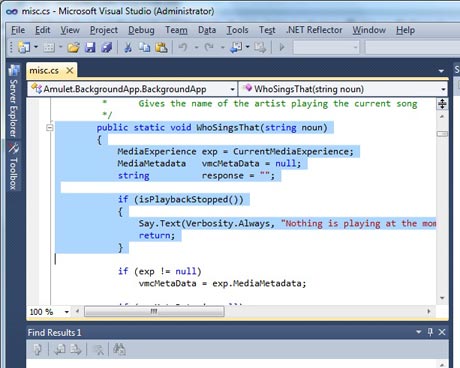

While the script didn’t work right away, it was certainly a good start. I made a few tweaks to it and managed to get it working properly on my installation. You can download my copy here – make sure to remove the trailing .txt suffix after downloading.

I installed WordPress as usual, but specifically WordPress 2.7 from the WordPress release archive, since I wanted a fairly old version that the migration script was more likely to work with. I configured it to use b2evolution’s database; WordPress uses different table names, so the two don’t conflict with each other. Plus, the migration script expects this, so you don’t really have a choice.
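For illustration, the relevant part of wp-config.php might look roughly like this when WordPress shares b2evolution’s database. The database name and credentials are placeholders, and the comment assumes b2evolution is using its usual evo_ table prefix; check both against your own installation.

```php
<?php
// wp-config.php excerpt (sketch): point WordPress at the existing
// b2evolution database. All values below are hypothetical placeholders.
define('DB_NAME', 'b2evolution_db');   // the same database b2evolution already uses
define('DB_USER', 'blog_user');
define('DB_PASSWORD', 'change-me');
define('DB_HOST', 'localhost');

// WordPress prefixes every table it creates with this string (wp_posts,
// wp_terms, ...). As long as it differs from b2evolution's prefix
// (assumed to be 'evo_'), both sets of tables can share one database.
$table_prefix = 'wp_';
```

The rest of wp-config.php (the keys, ABSPATH and the require of wp-settings.php) stays as the installer generated it.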

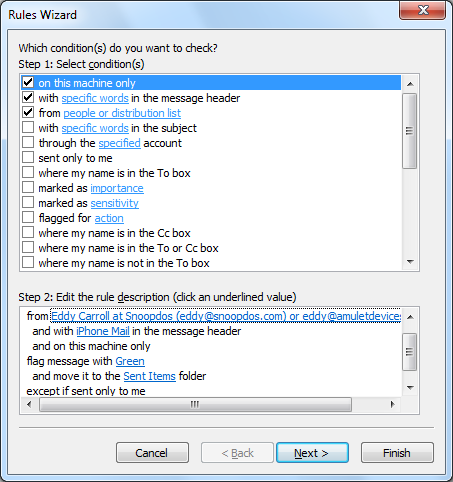

Next, I uploaded the migration script to my WordPress wp-admin folder, then invoked it directly (e.g. http://yourblogaddress/wp-admin/import-b2evolution-wp2.php) and filled in the relevant values in the form presented.

It took me a couple of goes to get it right, so after the first failure, I installed the WordPress Reset plug-in; this makes it very easy to reset the WordPress database ready for another try, without having to do a full WordPress re-install, and without altering the b2evolution entries.

I highly recommend checking your database with phpMyAdmin afterwards to make sure the posts appear correct!
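Alongside eyeballing the tables in phpMyAdmin, one quick sanity check is to compare post counts between the two sets of tables. The sketch below uses PDO; wp_posts and its post_type column are standard WordPress, but the b2evolution posts table name (evo_posts here) varies between b2evolution versions, so treat it as an assumption and confirm it in phpMyAdmin first. The function names are hypothetical.

```php
<?php
// Run a COUNT(*) query and return the result as an integer.
function count_rows(PDO $db, string $sql): int
{
    return (int) $db->query($sql)->fetchColumn();
}

// Compare the number of old b2evolution posts with imported WordPress posts.
// $b2Table is an assumption: check the real table name for your version.
function compare_post_counts(PDO $db, string $b2Table = 'evo_posts', string $wpPrefix = 'wp_'): array
{
    // Table names can't be bound as query parameters, so only allow plain identifiers.
    foreach ([$b2Table, $wpPrefix] as $name) {
        if (!preg_match('/^[A-Za-z0-9_]+$/', $name)) {
            throw new InvalidArgumentException("Unexpected table name: $name");
        }
    }
    $old = count_rows($db, "SELECT COUNT(*) FROM $b2Table");
    // Only real posts; revisions and pages live in the same table with other post_type values.
    $new = count_rows($db, "SELECT COUNT(*) FROM {$wpPrefix}posts WHERE post_type = 'post'");
    return ['b2evolution' => $old, 'wordpress' => $new, 'match' => $old === $new];
}
```

Against the live database, that would be something like `print_r(compare_post_counts(new PDO('mysql:host=localhost;dbname=b2evolution_db', 'blog_user', 'change-me')));`, again with placeholder credentials.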

Even with the script, I still had to update the categories manually; my version of the script didn’t migrate them across properly. Since I only had 100 or so entries, it was easy enough to list them by category in b2evolution’s tables using phpMyAdmin. I could then select the matching posts by hand in WordPress and assign them to each category using the bulk edit option.

(If I’d had many more posts, I might have spent some more effort getting the category migration working correctly.)

Finally, once I was confident everything was working okay, I updated WordPress from 2.7 to 4.1, which is MUCH nicer.

And all done!

Legacy URL support

Well, not quite done, as it turned out. There are plenty of links out there to my old b2evolution posts, and it would be nice if they could magically redirect to the new WordPress equivalent, to keep both the search engines and users happy.

This turns out to require a little .htaccess magic, and some PHP scripting. I added the following to WordPress’s .htaccess (I’ve reproduced the entire file for reference):

```apache
# BEGIN WordPress
RewriteEngine On
RewriteBase /blog/

# Check for references to the old b2evolution blog and send them
# to our redirect script where they'll be properly handled.
#
RewriteRule b2redirect.php - [L]
RewriteCond %{QUERY_STRING} ^(m=|.*cat=|.*blog=5|.*author=|pb=1|title=)
RewriteRule .* /blog/b2redirect.php [L,R=301]

# Normal WordPress rules
RewriteRule ^index\.php$ - [L]
RewriteCond %{REQUEST_FILENAME} !-f
RewriteCond %{REQUEST_FILENAME} !-d
RewriteRule . /blog/index.php [L]
# END WordPress
```

(Watch out for word wrap on the QUERY_STRING line – the bracketed items are part of the same line.)

Essentially, this says that any request whose query string looks like an old b2evolution link should go straight to my custom script, b2redirect.php. That covers m=xxx (date reference), cat=xxx (category reference), blog=5 (my old blog’s internal ID), author=xxx (show author posts), title=xxx (title reference) and pb=1 (b2evolution specific). Because the redirect target doesn’t specify a query string of its own, mod_rewrite passes the original parameters along unchanged, so the script can read them. Everything else is handled by WordPress as usual.

(We use a 301 Redirect to indicate to browsers and search engines that this is a permanent redirection, and the new URL should be used in future.)
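To check which requests that condition catches, the same pattern can be tried out with PHP’s preg_match(), since mod_rewrite also uses PCRE-style regular expressions. This is only a test harness for the regular expression, not part of the live setup; the function name and sample query strings are made up.

```php
<?php
// The pattern from the RewriteCond line, wrapped in PCRE delimiters.
const B2_QUERY_PATTERN = '/^(m=|.*cat=|.*blog=5|.*author=|pb=1|title=)/';

// Returns true if the rules would send this query string to b2redirect.php.
function is_legacy_b2_query(string $queryString): bool
{
    return preg_match(B2_QUERY_PATTERN, $queryString) === 1;
}

$samples = ['m=200503', 'blog=5&cat=15', 'title=hello-world', 'p=42', 's=wordpress'];
foreach ($samples as $qs) {
    printf("%-20s -> %s\n", $qs, is_legacy_b2_query($qs) ? 'b2redirect.php' : 'WordPress');
}
```

Note that m=, pb=1 and title= only match at the very start of the query string, whereas cat=, blog=5 and author= can appear anywhere in it, thanks to the leading `.*`.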

I learnt a couple of useful things about mod_rewrite figuring this out. I hadn’t fully appreciated that a RewriteRule pattern only ever matches the path part of the URL, never the query string; if you need to match parameter names or values, you must use RewriteCond to test the %{QUERY_STRING} variable.
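PHP’s parse_url() makes the same split that mod_rewrite does: the path is what a RewriteRule pattern is tested against, while everything after the ? is only reachable through %{QUERY_STRING} in a RewriteCond. The URL below is a hypothetical old-style link, and the sketch assumes the .htaccess file sits in the /blog/ directory, in which case mod_rewrite also strips that directory prefix before matching.

```php
<?php
// Hypothetical legacy b2evolution link.
$legacy = 'http://www.snoopdos.com/blog/index.php?blog=5&title=hello-world';

// What a RewriteRule pattern sees: the path only...
$path = parse_url($legacy, PHP_URL_PATH);
// ...and in /blog/.htaccess, with the per-directory prefix "/blog/" removed.
$rulePath = substr($path, strlen('/blog/'));

// What RewriteCond %{QUERY_STRING} sees: everything after the "?".
$query = parse_url($legacy, PHP_URL_QUERY);

// parse_str() decodes the query string into the same parameters
// that b2redirect.php later reads from $_GET.
parse_str($query, $params);

echo "RewriteRule sees: $rulePath\n";
echo "RewriteCond sees: $query\n";
print_r($params);
```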

And of course, I got caught out by a redirect loop: the redirected URL still carried the old parameters, so when the browser fetched it, those parameters immediately triggered another redirect, and so on until the browser eventually gave up. This is why the very first rule says that references to b2redirect.php should be passed through without any rewriting at all.
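The toy model below makes the ordering concrete. It is a hypothetical PHP function, not anything mod_rewrite actually runs: it applies simplified versions of the rule groups in file order to a path (relative to /blog/) and a query string, and shows that without the pass-through for b2redirect.php, the follow-up request would match the legacy condition again.

```php
<?php
// Toy model of the .htaccess rules, applied in file order.
// Returns 'pass' (serve unchanged), 'redirect' (301 to b2redirect.php)
// or 'wordpress' (normal WordPress handling).
function route(string $path, string $query, bool $withPassThrough = true): string
{
    // Rule 1: requests for the redirect script are left alone.
    if ($withPassThrough && preg_match('/b2redirect.php/', $path) === 1) {
        return 'pass';
    }
    // Rule 2: legacy b2evolution query strings get a 301 to b2redirect.php.
    if (preg_match('/^(m=|.*cat=|.*blog=5|.*author=|pb=1|title=)/', $query) === 1) {
        return 'redirect';
    }
    // Remaining rules: normal WordPress handling (file/directory checks omitted).
    return 'wordpress';
}

// First request: an old b2evolution link.
echo route('index.php', 'blog=5&title=hello-world'), "\n";
// Follow-up request after the 301; the query string comes along with it.
echo route('b2redirect.php', 'blog=5&title=hello-world'), "\n";
// Same follow-up without rule 1: it would be redirected again, forever.
echo route('b2redirect.php', 'blog=5&title=hello-world', false), "\n";
```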

So what is b2redirect.php? It’s a small script I wrote that interprets the old b2Evolution parameters and figures out a WordPress equivalent. Here it is:

```php
<?php
// Redirect b2evolution blog URLs to WordPress

$baseurl = "http://www.snoopdos.com/blog";

// Map old b2evolution category IDs to WordPress category slugs
$catmap = array();
$catmap[14] = "observation";
$catmap[15] = "technology";
$catmap[16] = "random-thoughts";
$catmap[17] = "networking";
$catmap[18] = "windows";
$catmap[19] = "rant";
$catmap[20] = "useful-links";

// Read the legacy parameters, defaulting to "" when absent
// (avoids undefined-index warnings)
$title  = $_GET["title"] ?? "";
$m      = $_GET["m"] ?? "";
$cat    = $_GET["cat"] ?? "";
$author = $_GET["author"] ?? "";

// Set default URL: the blog's home page
$url = "$baseurl/";

// strpos() returns 0 for a leading ':', so compare against false explicitly
if (!empty($title) && strpos($title, ":") === false)
{
    // Post title maps directly onto the WordPress permalink
    $url = "$baseurl/$title";
}
else if (!empty($cat) && !empty($catmap[$cat]))
{
    $url = "$baseurl/category/$catmap[$cat]";
}
else if (!empty($m) && (strlen($m) == 4 || strlen($m) == 6))
{
    // m=YYYY or m=YYYYMM becomes a year or year/month archive
    $year = substr($m, 0, 4);
    $month = substr($m, 4, 2);
    if ($year >= 2005 && $year <= 2013)
    {
        $url = "$baseurl/$year/";
        if (strlen($month) > 0)
            $url .= "$month/";
    }
}
else if (!empty($author))
{
    $url = "$baseurl/author/eddy/";
}

// Now issue the permanent redirect to the new location
header("Location: $url", true, 301);
exit;
?>
```

Once again, the categories needed some special handling. Otherwise, it was straightforward – month references get changed to WordPress archive format (year/month); titles are mapped to the equivalent WordPress direct URL; category numbers go to the new WordPress equivalent name; author references show the WordPress author page; and everything else goes to the home page of the blog – better than a 404 Page Not Found.
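To test the mapping without a web server, the decision logic can be pulled out into a function that takes the query parameters as an array and returns the target URL. This is a hypothetical refactoring of the script above (the name b2_redirect_url is mine), with the same category map, 2005 to 2013 year range and author slug, plus a little extra input validation.

```php
<?php
// Hypothetical pure-function version of b2redirect.php's decision logic.
function b2_redirect_url(array $params, string $baseurl = 'http://www.snoopdos.com/blog'): string
{
    // Old b2evolution category IDs => WordPress category slugs
    $catmap = [
        14 => 'observation',
        15 => 'technology',
        16 => 'random-thoughts',
        17 => 'networking',
        18 => 'windows',
        19 => 'rant',
        20 => 'useful-links',
    ];

    // Missing parameters default to ""
    $title  = (string) ($params['title'] ?? '');
    $m      = (string) ($params['m'] ?? '');
    $cat    = (string) ($params['cat'] ?? '');
    $author = (string) ($params['author'] ?? '');

    // 1. A post title (without ':') maps onto the WordPress permalink
    if ($title !== '' && strpos($title, ':') === false) {
        return "$baseurl/" . rawurlencode($title);
    }

    // 2. A known numeric category ID maps onto the category archive
    if (ctype_digit($cat) && isset($catmap[(int) $cat])) {
        return "$baseurl/category/" . $catmap[(int) $cat];
    }

    // 3. m=YYYY or m=YYYYMM maps onto the year or year/month archive
    if (ctype_digit($m) && (strlen($m) === 4 || strlen($m) === 6)) {
        $year = (int) substr($m, 0, 4);
        if ($year < 2005 || $year > 2013) {
            return "$baseurl/"; // outside the handled range: home page
        }
        $url = "$baseurl/$year/";
        if (strlen($m) === 6) {
            $url .= substr($m, 4, 2) . '/';
        }
        return $url;
    }

    // 4. Any author reference goes to the author page
    if ($author !== '') {
        return "$baseurl/author/eddy/";
    }

    // 5. Anything else: the blog's home page rather than a 404
    return "$baseurl/";
}
```

With the logic in a function, the live script reduces to `header('Location: ' . b2_redirect_url($_GET), true, 301); exit;`, and each mapping can be checked with a handful of sample parameter arrays.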

So that’s that job done. Now let’s see what the next 10 years bring…

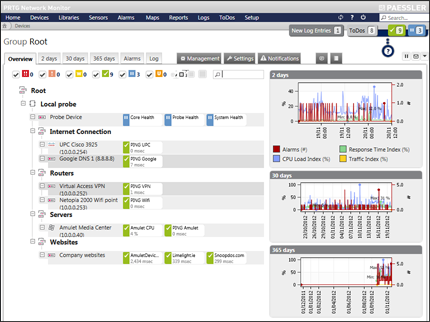

There are many reasons for choosing Snoopdos. Our team of experts combines highly communicative people with varied skills in a wide range of sectors which means we have a wealth of knowledge and creativity to offer you. We have collaborated internationally with great success. Our scope spans all kinds of projects for companies of all sizes. We can devise a program with which to achieve your goals. Take your business to the next level with our help!
